A bot called Aristotle: Can maths help AI chatbots improve accuracy

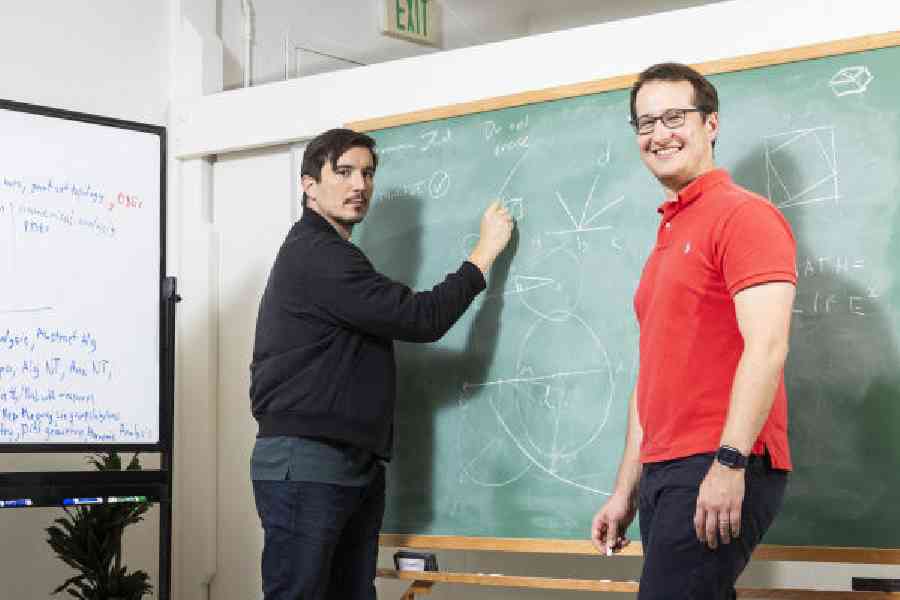

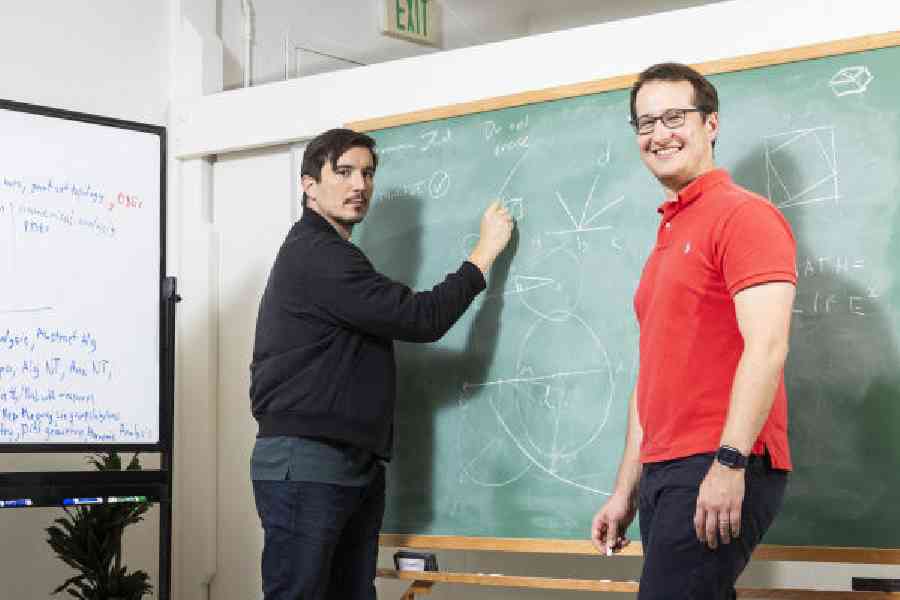

On a recent afternoon, Tudor Achim gave a brainteaser to an artificial intelligence bot that goes by the name Aristotle.

The question involved a 10-by-10 table filled with a hundred numbers. If you collected the smallest number in each row and the largest number in each column, he asked, could the largest of the small numbers ever be greater than the smallest of the large numbers?

The bot correctly answered “No”. But that was not surprising. Popular chatbots such as ChatGPT may give the right answer, too. The difference was that Aristotle had proved that its answer was right. The bot generated a detailed computer program that verified “No” was the correct response.

Chatbots including ChatGPT from OpenAI and Gemini from Google can answer questions, write poetry, summarise news articles and generate images.

But they also make mistakes that defy common sense. Sometimes, they make stuff up — a phenomenon called hallucination.

Achim, CEO and co-founder of a Silicon Valley start-up called Harmonic, is part of a growing effort to build a new kind of AI that never hallucinates. Today, this technology is focused on mathematics. But many leading researchers believe they can extend the same techniques in computer programming and other areas.

Because maths is a rigid discipline with formal ways of proving whether an answer is right or wrong, companies such as Harmonic can build AI technologies that check their own answers and learn to produce reliable information.

Google DeepMind, the tech giant’s central AI lab, recently unveiled a system called AlphaProof that operates in this way. Competing in the International Mathematical Olympiad, the premier maths competition for high-schoolers, the system achieved “silver medal” performance, solving four of the competition’s six problems. It was the first time a machine had reached that level.

“This is a path around hallucinations,” said David Silver, a principal research scientist at Google DeepMind. “Proof is a form of truth.” Using similar techniques, some researchers believe they can eventually build an AI system that is better at maths than any human. That’s the goal of Achim and his co-founder Vlad Tenev.

Others, such as Silver, believe these techniques can extend even further, leading to AI systems that can verify physical truths as well as mathematical.

Around 2017, companies including Google, Microsoft and OpenAI began building large language models. These AI systems often spent months analysing digital text culled from across the Internet, including books, Wikipedia articles and chat logs.

By pinpointing patterns in all that text, these systems learned to generate text of their own, including term papers, poetry and computer code. They could even carry on a conversation.

But the technology also seemed dopey at times. It seemed to just spit out what it had learned from the Internet — unable to verify whether the information was right or wrong, real or completely made-up.

OpenAI just unveiled a new version of ChatGPT that was designed to reason through questions. It spends time “thinking”, trying different strategies in an effort to reach the right answer. But it still gets things wrong and makes stuff up.

Researchers such as Achim are beginning to address these problems through mathematics. With maths, you can formally prove whether an answer

is right or wrong.

About a decade ago, a Microsoft researcher named Leonardo de Moura created a computer programming language specifically for proving mathematical statements. Called Lean, this programming language was originally a tool for human mathematicians. But now that AI systems are skilful enough to generate their own computer code, they can also use Lean.

Harmonic is designing a large language model that can generate its own Lean proofs. The Lean code it generates is not always perfect. But through trial and error, it can learn to verify a solution.

“It is a lot like a human,” Achim said. “If you are trying to solve a maths problem, you try certain steps. And if they fail, you try others, until you get them right.”

When Aristotle is asked to answer maths problems, it can check the answers. These might be simple questions like “What is 2+2?” Or they might be more complex brainteasers like the one with the 10-by-10 grid of numbers.

“If the system can output an answer, it is basically guaranteed to be correct,” Achim said.

As Aristotle checks its own answers, it becomes a way of generating enormous amounts of trustworthy digital data that can be used to teach AI systems. In other words, Aristotle can generate data that can be used to improve itself.

NYTNS